10 Developer Tips for Testing and Selecting a Video Call API Solution

If you want to make integrated video calls feel like a native part of your application, here are 10 technical insights to guide you when choosing or evaluating a video call API.

Early in an evaluation, almost every video call API and SDK looks good enough, because you’re likely testing with local streams on fast office Wi-Fi.

However, the real test begins a few months post-deployment, when you're navigating compliance audits and supporting users who join on legacy devices or from less-than-ideal networks.

Choosing a video call API directly affects your product’s usability, reliability, and time to market. If you need integrated video calls to feel like a native part of your application, the 10 technical insights below will guide your evaluation.

1. Check if you have the long-term resources to self-host

Self-hosted solutions like Jitsi seem cost-effective at first, with no usage fees and full control. But after the prototype, the maintenance requirements add up quickly.

You’re responsible for securing access, implementing authentication layers, and managing infrastructure like SFUs, signaling, and STUN/TURN across unreliable real-world networks.

This isn’t a one-time setup. It’s ongoing work that involves monitoring, patching, and debugging edge cases. So calculate the total cost of ownership, not just upfront savings. If your team is spending significant time maintaining WebRTC infrastructure, you’re trading licensing savings for engineering time.
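As a rough illustration of that total-cost calculation, here is a minimal sketch. Every figure and name in it (infrastructure spend, engineering hours, hourly cost, per-minute price) is a placeholder assumption, not real pricing from any provider; substitute your own numbers.

```python
from dataclasses import dataclass


@dataclass
class SelfHostedCosts:
    """Monthly inputs for a self-hosted WebRTC stack (all values hypothetical)."""
    infrastructure: float        # servers, bandwidth, TURN relay traffic
    engineer_hours: float        # monitoring, patching, debugging edge cases
    hourly_engineer_cost: float  # fully loaded cost of one engineering hour


def self_hosted_monthly_cost(costs: SelfHostedCosts) -> float:
    """Infrastructure spend plus the engineering time needed to keep it running."""
    return costs.infrastructure + costs.engineer_hours * costs.hourly_engineer_cost


def managed_monthly_cost(participant_minutes: int, price_per_minute: float,
                         platform_fee: float = 0.0) -> float:
    """Usage-based pricing: an optional flat fee plus a per-participant-minute rate."""
    return platform_fee + participant_minutes * price_per_minute


if __name__ == "__main__":
    # Placeholder numbers only; they do not reflect any real provider's prices.
    self_hosted = self_hosted_monthly_cost(
        SelfHostedCosts(infrastructure=800.0, engineer_hours=40.0, hourly_engineer_cost=90.0)
    )
    managed = managed_monthly_cost(participant_minutes=100_000, price_per_minute=0.004)
    print(f"self-hosted: {self_hosted:.2f}  managed: {managed:.2f}")
```

The point of the sketch is the second term in the self-hosted formula: engineering hours are usually the largest cost and the easiest to leave out of the comparison.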

💡Tip: Use a managed video call API, like Whereby, to reduce the operational overhead so you can focus on building features that matter.

2. Audit the SDK’s life expectancy and roadmap

One of the primary anxieties for technical leaders is building complex clinical workflows on a video-call API or SDK that will eventually be retired.

Some providers also introduce breaking “v2/v3” rewrites or quietly change their underlying architecture, which can force you into unexpected migrations.

This risk is higher with platforms where video is just one product in a larger suite. When priorities change, video can fall down the roadmap or even get deprecated. We’ve seen this play out with Twilio’s video product changes, where teams were suddenly pushed to rethink their stack.

💡Tip: Take time to assess the provider’s track record. How do they handle versioning, deprecations, and backward compatibility? APIs that treat video as a core product, like Whereby, tend to offer more stability, so you’re not rebuilding critical parts of your app every year.

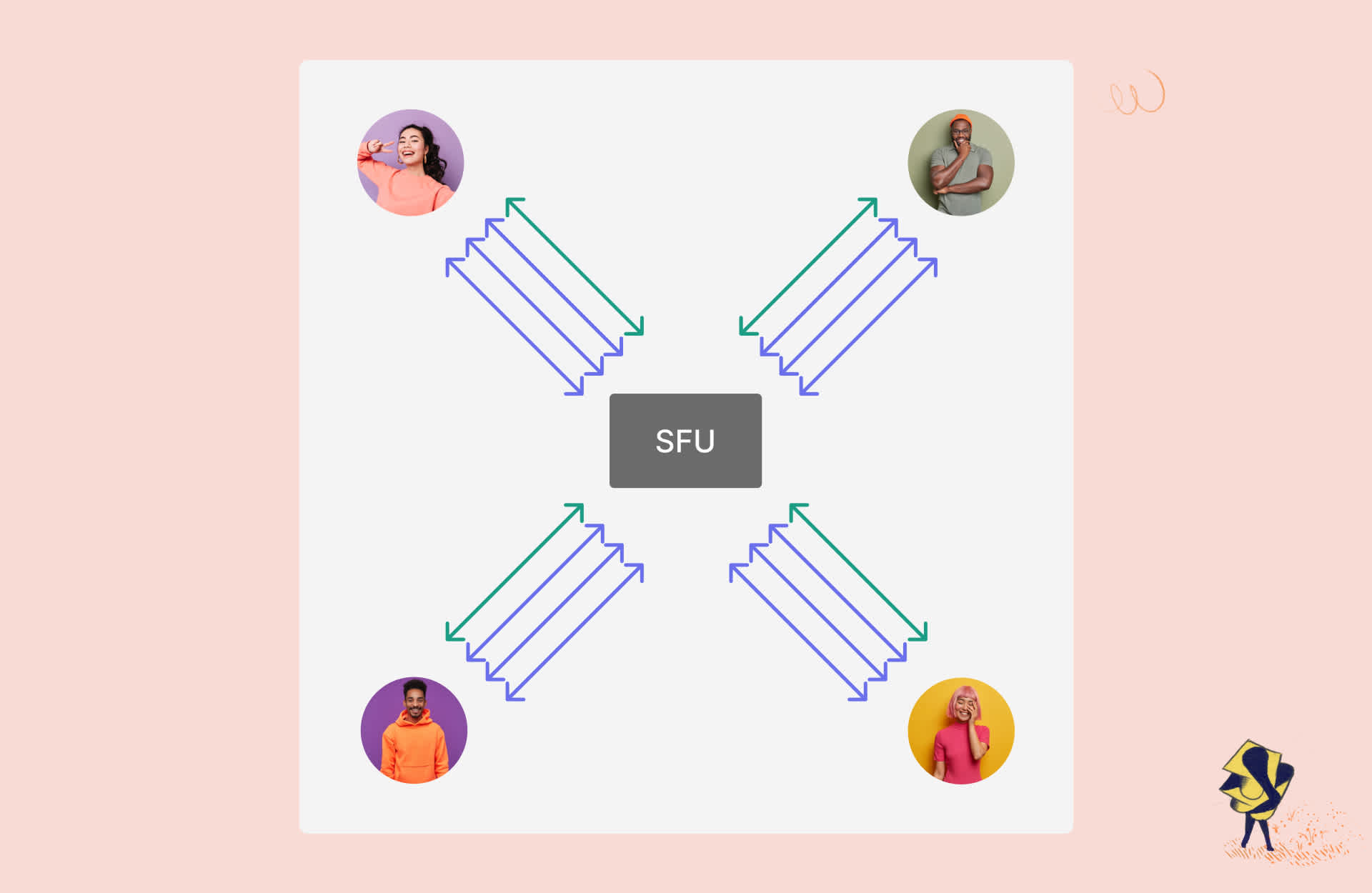

3. Choose the right media architecture: P2P vs SFU vs MCU

The architecture behind your video calls directly impacts scalability, latency, and device performance, so it’s not something to treat as an afterthought.

P2P (Peer-to-Peer): Ideal for 1:1 calls where the participants are in relatively close proximity. It’s direct and supports true end-to-end encryption, but performance can break down fast as more participants are added, since bandwidth and CPU usage grow with each connection.

SFU (Selective Forwarding Unit): The modern default. Instead of every participant sending media directly to everyone else, each participant sends its streams to a server that forwards them without re-encoding. This keeps latency lower than server-side mixing, scales much better for groups, and often performs better even for long-distance 1:1 calls. Media is encrypted in transit but decrypted at the server, so it is not end-to-end encrypted unless the provider adds a separate E2EE layer.

MCU (Multipoint Control Unit): Mixes all participants into a single output stream. That makes things easier for client devices, but adds more server cost and usually more latency because the media has to be transcoded and combined.
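To make the scaling difference concrete, the sketch below counts how many media streams a single participant uploads and downloads under each architecture. It is a simplified model that assumes one combined audio/video stream per participant and ignores simulcast and audio-only optimizations; the function name is illustrative.

```python
def streams_per_participant(topology: str, participants: int) -> tuple[int, int]:
    """Return (uploads, downloads) of media streams for one participant.

    Simplified model: one stream per participant, no simulcast.
    """
    if participants < 2:
        return (0, 0)
    others = participants - 1
    if topology == "p2p":
        # Full mesh: send to and receive from every other participant.
        return (others, others)
    if topology == "sfu":
        # Send once to the server, receive one forwarded stream per other participant.
        return (1, others)
    if topology == "mcu":
        # Send once, receive a single mixed stream.
        return (1, 1)
    raise ValueError(f"unknown topology: {topology}")


if __name__ == "__main__":
    for topology in ("p2p", "sfu", "mcu"):
        print(topology, streams_per_participant(topology, 6))
```

For a six-person call this gives 5 uploads per participant in a P2P mesh versus 1 with an SFU or MCU, which is why mesh calls degrade first on constrained uplinks.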

💡Tip: Look for APIs that support more than one of these architectures, so you can test and optimize for your specific use case. In most scenarios, a globally distributed SFU setup will give you the best balance of quality, performance, and scalability.

4. Security and compliance aren’t optional

If you’re building for healthcare, fintech, or any regulated space, security is a non-negotiable requirement. You need clear answers on how media is encrypted, where it’s processed, and whether it’s stored at all.

WebRTC gives you encryption in transit by default (DTLS/SRTP), but that’s just the baseline. For more sensitive use cases, you may need end-to-end encryption (E2EE), stricter access controls, and auditability around how sessions are handled.

A provider’s compliance posture matters just as much as the underlying tech. Look at what standards they meet (e.g., HIPAA, ISO 27001), what’s covered under their responsibility vs yours, and how much control you have over encryption and data handling.

💡Tip: Ask providers how they support audits and ongoing compliance. What controls are built in vs configurable? These details matter because waiting until an audit to figure this out is usually too late.

5. Stress test the insights layer, not just the call quality

Call quality is what users notice in the moment. When a user reports “the video was blurry” or “audio kept cutting out,” your ability to debug quickly depends entirely on the tooling behind the API.

Insights are what help you understand trends, prove SLA compliance, handle billing, and preempt churn. Established video call platforms like Whereby expose both client-side events and server-side telemetry.

You need to see metrics such as bitrate, packet loss, reconnections, and device issues tied to specific sessions or room IDs. Being able to drill into a single call and see exactly what went wrong (network, device, or infrastructure) can turn a multi-day investigation into a quick, actionable fix.
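As an illustration of tying those metrics to a specific session, here is a minimal sketch that groups quality samples by session and participant and flags likely problems. The `QualitySample` shape and the thresholds are assumptions made for the example; real providers expose their own fields and units, and sensible thresholds depend on your use case.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class QualitySample:
    """One periodic measurement for a participant (hypothetical shape)."""
    session_id: str
    participant_id: str
    bitrate_kbps: float
    packet_loss_pct: float
    reconnected: bool = False


def flag_issues(samples, max_loss_pct=3.0, min_bitrate_kbps=300.0):
    """Return {(session_id, participant_id): [issues]} for participants with problems."""
    grouped = defaultdict(list)
    for sample in samples:
        grouped[(sample.session_id, sample.participant_id)].append(sample)

    report = {}
    for key, group in grouped.items():
        issues = []
        # Thresholds are illustrative defaults, not recommended values.
        if mean(s.packet_loss_pct for s in group) > max_loss_pct:
            issues.append("high packet loss")
        if mean(s.bitrate_kbps for s in group) < min_bitrate_kbps:
            issues.append("low bitrate")
        reconnects = sum(s.reconnected for s in group)
        if reconnects:
            issues.append(f"{reconnects} reconnection(s)")
        if issues:
            report[key] = issues
    return report
```

If a provider's insights data cannot be reduced to something like this per-session view, debugging a single user complaint will be much harder.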

💡Tip: After each test session during your evaluation, open the provider's insights layer. Check who joined and when, what their connection quality was, how many minutes were consumed, and what other data is available.

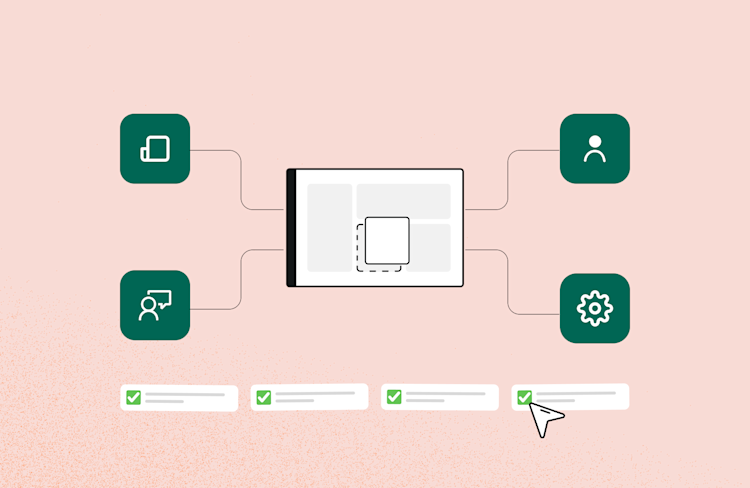

6. Test how deeply you can customize the UI without forking the SDK

Most embedded video APIs give you a binary choice: use their pre-built UI as-is, or build everything from scratch with an SDK. Neither extreme is ideal when you need the video experience to feel native to your product.

Look for APIs that offer granular UI control at the component level. Whereby's web component, for example, exposes individual attributes to toggle specific elements like the bottom toolbar, participant list, screenshare button, logo, timer, chat, and even the self-view without requiring you to rewrite the underlying call logic.

This means you can strip the UI down to just what your users need, and customize it to better fit your brand while still benefiting from a fully managed media layer underneath.

💡Tip: During evaluation, try to replicate your target UI using only the API's built-in customization options. If you have to override styles with hacky CSS selectors or maintain a fork to get the look you want, make sure you have the resources for deeper customization.

7. Don't overlook the pre-call experience as part of your product

Developers often evaluate video APIs purely on in-call behaviour, but the pre-call device check is where a surprising number of user complaints originate.

If someone joins with the wrong microphone selected or a blocked camera permission, and there's no checkpoint to catch it before the call starts, the blame falls on your product, not their browser settings.

A pre-call check can help surface these issues early by testing things like the camera, microphone, speakers, and connection quality. It’s also useful to give users some control, such as the option to skip the check, access help resources, or move through quickly when needed.

A well-designed pre-call flow significantly reduces "the call didn't work" support tickets, and a configurable one means you're not stuck with behaviour that doesn't match your UX.

💡Tip: Simulate a first-time user joining on a device with conflicting audio devices or with camera permissions already denied, and observe what the API returns.

8. Check whether the API is ready for AI-powered workflows

If you're building anything today that involves AI, you need to understand how well your video API can support it both now and in the near future.

This could include things like accessing audio/video streams, generating transcriptions, or enabling automated workflows around meetings. Some platforms are also starting to explore agent-like or programmatic participants, but support here is still evolving across the industry.

Whereby, for example, is actively working in this space with Assistants (currently in closed beta). It’s early, but it points to where things are heading.

In the meantime, focus on what’s already in production. Check for built-in transcription, recording, and other features that can feed into AI workflows without adding a lot of overhead.

💡Tip: Think beyond the current product roadmap. Even if you don't need AI integration today, choosing a platform that already has the architecture for it means you won't be switching providers when that requirement surfaces.

9. Test your webhook architecture before you need it in production

Most video APIs let you embed a call. Far fewer give you a reliable, structured way to react to what happens inside that call from your backend. Webhooks are where video starts to feel like part of your application rather than just an iframe.

Some providers offer server-side webhook events across the full session lifecycle, including joins, leaves, waiting room activity, session start/end, and when recordings or transcriptions are ready. These events often include identifiers and metadata you define when creating sessions, making it possible to map them directly to users and records in your own system.

Security details matter too. Look for webhook implementations that include signing (e.g., HMAC-SHA256) and timestamps to guard against replay attacks, especially if you’re connecting events to billing, compliance logs, or downstream automation.

💡Tip: During evaluation, build a small test harness that listens to webhook events and logs them against your user IDs. If the mapping is clean and events fire reliably, you have a solid foundation for post-call workflows.
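The core of such a harness can be very small. The sketch below logs incoming events against your own user IDs and keeps unmapped events separately so gaps are visible. The payload shape (`type`, `data.externalId`) and the class name are hypothetical; replace them with the fields your provider actually sends.

```python
import json
from collections import defaultdict
from typing import Optional


class WebhookEventLog:
    """In-memory log that maps webhook events to your own user IDs."""

    def __init__(self, external_id_to_user: dict):
        self.external_id_to_user = external_id_to_user
        self.by_user = defaultdict(list)  # user_id -> list of event types
        self.unmapped = []                # events that matched no known user

    def record(self, raw_body: str) -> Optional[str]:
        """Store one event; return the matched user ID, or None if unmapped."""
        event = json.loads(raw_body)
        event_type = event.get("type", "unknown")
        # 'externalId' is an assumed field name for the identifier you supplied.
        external_id = event.get("data", {}).get("externalId")
        user_id = self.external_id_to_user.get(external_id)
        if user_id is None:
            self.unmapped.append(event)
            return None
        self.by_user[user_id].append(event_type)
        return user_id
```

During a test call, an empty `unmapped` list and a complete join/leave sequence per user are good signs that the identifiers you pass in are carried through reliably.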

10. Don't evaluate mobile as an afterthought

Web-first evaluations are common, but if your product has a mobile component (or might in the future), the mobile integration story needs to be tested separately.

WebRTC behaviour on mobile differs from desktop, and some APIs that work smoothly in a browser become significantly harder to embed correctly inside a native app.

Specifically check camera and microphone permission handling, background audio behaviour, and what happens when a user switches apps mid-call.

These are the edge cases that generate mobile-specific support tickets post-launch, and they're much cheaper to discover during evaluation than after.

💡Tip: Users can also join rooms directly through mobile browsers like Safari and Chrome, which is useful for enabling quick access in a variety of on-the-go use cases. Whereby also supports native mobile integration on iOS and Android via WebView embedding and native SDKs, with additional support for Flutter and React Native.

Wrapping up

Choosing a video call API looks straightforward until it isn’t. These tips focus on the questions to ask before you commit, including architecture, security, mobile support, observability, AI readiness, and long-term scalability.

If you want to apply them in practice, we recommend building a simple proof of concept with Whereby as a starting point.

Our developer-friendly documentation and a free plan that includes 2,000 participant minutes per month allow you to test your requirements in a few days.